Every run is a fully inspectable record.

Prompt Tornado logs every execution step: the model called, the provider used, the step's duration, tokens consumed, cost, and the exact output. When something goes wrong, you have a ledger, not a guess.
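To make the idea of a per-step ledger concrete, here is a minimal sketch of how such a record could be modeled. `StepRecord`, `RunLedger`, their field names, and the model and provider names are hypothetical. They are not Prompt Tornado's actual schema or API. The numbers in the usage lines are placeholder values, not measurements.

```python
from dataclasses import dataclass, field

# Hypothetical record shape for illustration only; not Prompt Tornado's schema.
@dataclass(frozen=True)
class StepRecord:
    step: str          # name of the pipeline step
    model: str         # model that was called
    provider: str      # provider that served the call
    duration_s: float  # wall-clock time for this step, in seconds
    tokens: int        # tokens consumed by this step
    cost_usd: float    # cost attributed to this step
    output: str        # exact output returned for this step

@dataclass
class RunLedger:
    steps: list[StepRecord] = field(default_factory=list)

    def log(self, record: StepRecord) -> None:
        # Append-only: records are never edited after they are logged
        self.steps.append(record)

    def total_tokens(self) -> int:
        return sum(s.tokens for s in self.steps)

    def total_cost(self) -> float:
        return sum(s.cost_usd for s in self.steps)

    def slowest(self) -> StepRecord:
        # Step with the longest duration; raises ValueError if nothing was logged
        return max(self.steps, key=lambda s: s.duration_s)

# Usage with placeholder values
ledger = RunLedger()
ledger.log(StepRecord("research", "model-a", "provider-x", 4.2, 1800, 0.012, "..."))
ledger.log(StepRecord("summarize", "model-b", "provider-y", 1.1, 600, 0.003, "..."))
print(ledger.total_tokens(), round(ledger.total_cost(), 3), ledger.slowest().step)
```

The frozen dataclass prevents a logged record from being modified after the fact. Keeping the ledger append-only is what lets it serve as a trustworthy audit trail, rather than a best guess reconstructed later.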

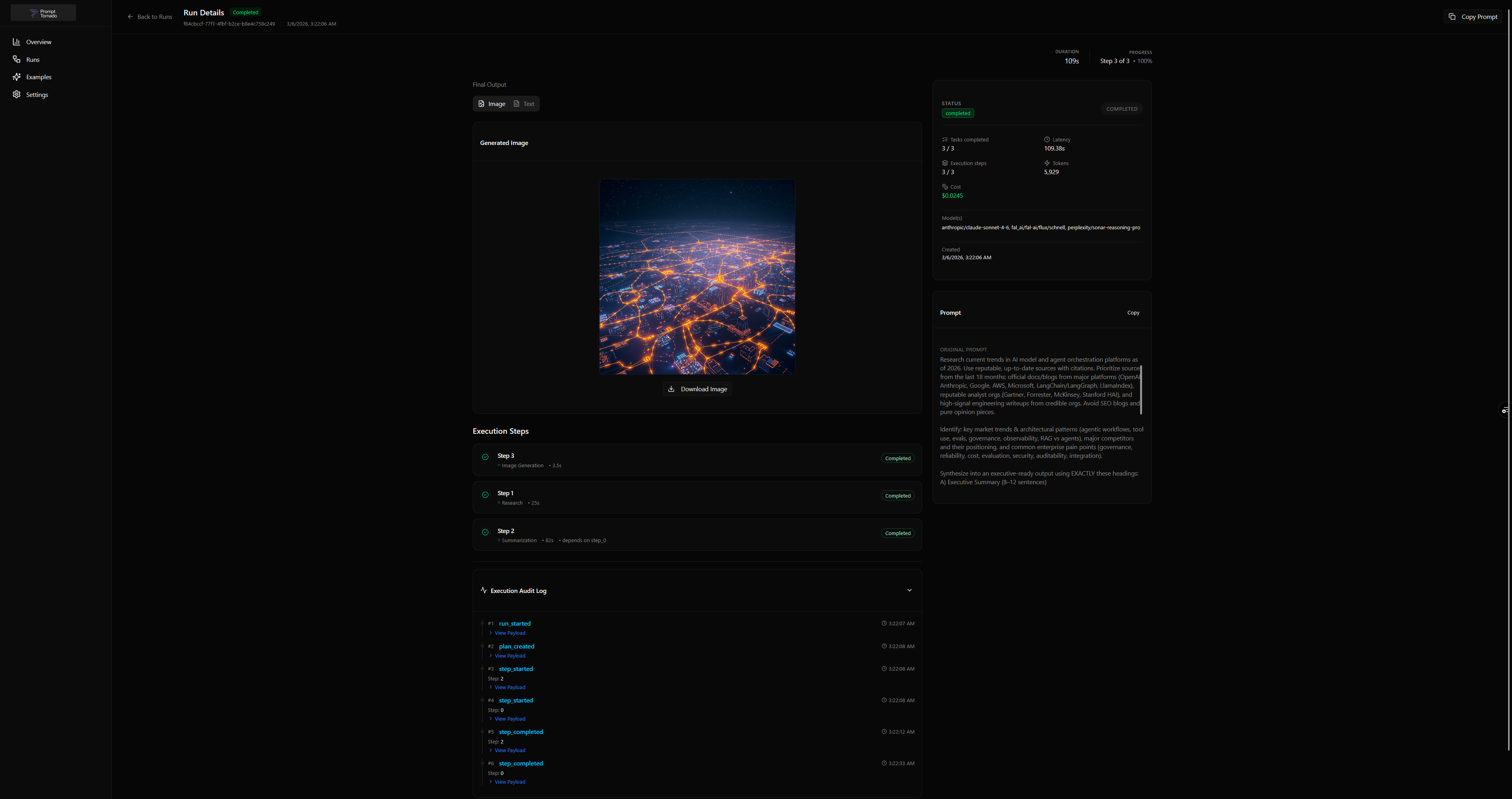

Three typed outputs (a research briefing, an executive summary, and a generated image) are returned as a single result. Every field is traceable to the step and model that produced it.
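One way to picture a typed result whose fields carry their own provenance is the sketch below. Every type, field, step, and model name here is hypothetical and chosen for illustration. It does not describe Prompt Tornado's real result format.

```python
from dataclasses import dataclass, fields

# Hypothetical types for illustration only; not Prompt Tornado's API.
@dataclass(frozen=True)
class Provenance:
    step: str   # pipeline step that produced the value
    model: str  # model used in that step

@dataclass(frozen=True)
class TextOutput:
    text: str
    source: Provenance

@dataclass(frozen=True)
class ImageOutput:
    uri: str        # where the generated image is stored (assumed)
    mime_type: str
    source: Provenance

@dataclass(frozen=True)
class RunResult:
    research_briefing: TextOutput
    executive_summary: TextOutput
    generated_image: ImageOutput

    def trace(self) -> dict[str, Provenance]:
        # Map every result field to the step and model that produced it
        return {f.name: getattr(self, f.name).source for f in fields(self)}

# Usage with placeholder content
result = RunResult(
    research_briefing=TextOutput("...", Provenance("research", "model-a")),
    executive_summary=TextOutput("...", Provenance("summarize", "model-b")),
    generated_image=ImageOutput("outputs/image.png", "image/png", Provenance("illustrate", "model-c")),
)

for name, src in result.trace().items():
    print(f"{name}: step={src.step}, model={src.model}")
```

In this sketch, provenance is attached to each output value rather than stored in a separate lookup table. As a result, a single field passed downstream still identifies the step and model that produced it.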